In the realm of technology, there are pivotal choices that can make or break the performance of your machine learning endeavors. While algorithms, data, and software undoubtedly play crucial roles, it’s often the hardware that can unleash the true potential of your artificial intelligence and deep learning projects. Enter the Graphics Processing Unit, or GPU, a silent hero in the world of machine learning, capable of transforming complex mathematical calculations into actionable insights with remarkable speed.

In this comprehensive guide, we embark on a journey to uncover the best GPU for your machine learning custom PC.

Image Credit: TheMVP

Growing importance of GPUs in machine learning

Machine learning is a subfield of artificial intelligence (AI) that focuses on the development of algorithms and statistical models that enable computer systems to learn and improve their performance on a specific task or problem without being explicitly programmed. In other words, machine learning allows computers to learn from data and make predictions or decisions based on that learning.

Hardware matters nowhere more than in machine learning, where Graphics Processing Units (GPUs) have reshaped the very fabric of AI-driven innovation. The right GPU can be the key to unlocking the full potential of your machine learning projects and propelling you ahead of the competition. The wrong GPU can stifle your progress, leading to frustration and missed opportunities.

The importance of Graphics Processing Units (GPUs) in machine learning has grown significantly over the years and continues to do so due to several key factors:

Parallel Processing Power

GPUs are designed to handle parallel tasks efficiently. Machine learning involves performing numerous matrix multiplications and mathematical operations simultaneously, making GPUs ideal for accelerating training and inference processes. This parallelism significantly speeds up model development and deployment.
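To see why matrix multiplication suits parallel hardware, note that every element of the output is its own dot product and depends on no other output element. The minimal sketch below (plain Python; the function names are illustrative) computes each cell as an independent task. Because of CPython's global interpreter lock, the threads here show that the cells *can* be computed independently rather than making the computation faster; a GPU applies the same decomposition across thousands of cores.

```python
from concurrent.futures import ThreadPoolExecutor


def output_cell(a, b, i, j):
    """Compute one element of C = A x B: the dot product of row i of A and column j of B."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def parallel_matmul(a, b, max_workers=4):
    """Multiply two matrices by treating every output cell as an independent task.

    No cell depends on any other cell, so the tasks can run in any order or
    all at once. GPUs exploit exactly this property.
    """
    rows, cols = len(a), len(b[0])
    coords = [(i, j) for i in range(rows) for j in range(cols)]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map returns results in the same order as coords.
        values = list(pool.map(lambda ij: output_cell(a, b, *ij), coords))
    # Reassemble the flat list of results into a rows x cols matrix.
    return [values[r * cols:(r + 1) * cols] for r in range(rows)]


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
print(parallel_matmul(a, b))  # [[19, 22], [43, 50]]
```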

Deep Learning Revolution

Deep learning, a subset of machine learning that uses neural networks with many layers, has achieved remarkable success in various applications, including image and speech recognition. Training deep neural networks requires substantial computational power, and GPUs are uniquely suited to handle the immense matrix computations involved.

Large Datasets

Machine learning models thrive on large datasets. GPUs enable faster data processing and analysis, allowing practitioners to work with massive datasets without prohibitive time constraints. This is particularly crucial in domains like natural language processing and computer vision.

Real-time Inference

Many machine learning applications, such as autonomous vehicles and real-time fraud detection, require rapid decision-making. GPUs provide the necessary computational speed to perform inference on models in real time, ensuring timely responses to changing conditions.

Cost-efficiency

GPUs offer an attractive cost-to-performance ratio. For highly parallel machine learning workloads, they deliver far more computational power per dollar than central processing units (CPUs). This cost-effectiveness has made machine learning more accessible to startups, researchers, and small enterprises.

Cloud Computing

Cloud service providers offer GPU instances, allowing users to access powerful GPUs on-demand. This democratizes machine learning by eliminating the need for substantial upfront hardware investments, making GPU resources accessible to a broader audience.

AI Hardware Innovations

Hardware companies have recognized the importance of GPUs in AI and machine learning. They are developing specialized GPU architectures, such as NVIDIA’s Volta and Ampere, with dedicated tensor cores for deep learning workloads, further enhancing their capabilities.

Scientific Research

GPUs are not limited to machine learning; they are extensively used in scientific research, simulations, and data analysis. This cross-pollination of technology accelerates innovation in both machine learning and scientific computing.

Advancements in AI

AI and machine learning are constantly evolving fields. As models become more complex and demand more computational resources, GPUs remain at the forefront, adapting to handle the latest breakthroughs and applications.

Understanding Machine Learning GPU Requirements

Image Credit: Exxactcorp

GPUs are designed to handle multiple computations simultaneously, which is critical for machine learning tasks that involve large datasets and complex mathematical operations. Many machine learning algorithms, particularly in deep learning, rely heavily on matrix operations. GPUs excel at performing these operations efficiently. GPUs can also significantly reduce training times for machine learning models, making experimentation and model iteration more accessible and efficient.

Additionally, GPUs can be scaled up by using multiple GPUs in parallel, which is essential for training large models on vast datasets.
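One common way to use several GPUs is data parallelism: each GPU receives a slice of the batch, computes gradients on its slice, and the gradients are averaged before the shared weights are updated (the idea behind PyTorch's `DistributedDataParallel`, for example). The sketch below simulates this in plain Python for a hypothetical one-parameter model `y_hat = w * x`, assuming the batch divides evenly across devices; under that assumption the averaged shard gradients equal the full-batch gradient.

```python
def mse_gradient(w, xs, ys):
    """Gradient of mean squared error with respect to w for the model y_hat = w * x."""
    n = len(xs)
    return sum(2 * x * (w * x - y) for x, y in zip(xs, ys)) / n


def data_parallel_gradient(w, xs, ys, num_devices):
    """Simulate data parallelism: shard the batch, compute local gradients, average them.

    Assumes the batch size is divisible by num_devices, so every shard has the
    same size and the average of shard gradients equals the full-batch gradient.
    """
    if len(xs) % num_devices:
        raise ValueError("batch size must be divisible by num_devices")
    shard = len(xs) // num_devices
    local_grads = [
        mse_gradient(w, xs[d * shard:(d + 1) * shard], ys[d * shard:(d + 1) * shard])
        for d in range(num_devices)
    ]
    # This averaging step stands in for the all-reduce that real multi-GPU
    # training performs over PCIe or NVLink.
    return sum(local_grads) / num_devices


xs, ys = [1.0, 2.0, 3.0, 4.0], [2.0, 4.0, 6.0, 8.0]
print(mse_gradient(1.0, xs, ys))               # -15.0
print(data_parallel_gradient(1.0, xs, ys, 2))  # -15.0
```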

Factors to consider when choosing a GPU for a machine learning custom PC

Compute Power:

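- CUDA Cores: The number of CUDA cores (NVIDIA's parallel processing units; AMD calls its equivalents stream processors) largely determines the GPU’s parallel processing capability. More cores typically mean higher compute power, although core counts are only directly comparable within the same architecture.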

- Clock Speed: A higher clock speed can improve the GPU’s performance in certain tasks.

Memory Capacity:

- VRAM (Video RAM): Larger VRAM lets you train larger, more complex models and use bigger batch sizes without running out of GPU memory.

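- HBM (High Bandwidth Memory): HBM, used on data-center GPUs such as NVIDIA's A100 and H100, offers much higher memory bandwidth than the GDDR6X memory on consumer cards, which is crucial for data-intensive tasks.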
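A rough way to size VRAM is to count the bytes each model parameter needs. The sketch below uses a common rule of thumb, not an exact formula: full-precision (FP32, 4-byte) training with the Adam optimizer keeps the weights, their gradients, and two optimizer states per parameter, while inference needs only the weights (assumed here in FP16, 2 bytes each). Activations, framework overhead, and the CUDA context are deliberately left out, so real usage will be higher; the model sizes are hypothetical.

```python
def training_memory_gb(num_params, bytes_per_param=4, optimizer_states=2):
    """Rough lower bound on GPU memory for full-precision training, in GB (1e9 bytes).

    Counts weights + gradients + optimizer states (Adam keeps two per parameter).
    Activations and framework overhead are NOT included.
    """
    copies = 1 + 1 + optimizer_states  # weights, gradients, optimizer states
    return num_params * bytes_per_param * copies / 1e9


def inference_memory_gb(num_params, bytes_per_param=2):
    """Rough memory for the weights alone at inference time (FP16 by default)."""
    return num_params * bytes_per_param / 1e9


# Hypothetical 1-billion-parameter model:
print(training_memory_gb(1_000_000_000))   # 16.0
print(inference_memory_gb(1_000_000_000))  # 2.0
```

By this estimate, a 24 GB card has room for a 1-billion-parameter model's training state with some space left for activations, whereas a 7-billion-parameter model (about 112 GB by the same rule) would need memory-saving techniques or multiple GPUs.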

Tensor Cores and AI-specific Features:

- Tensor Cores: These specialized cores, found in NVIDIA GPUs since the Volta architecture (including Turing, Ampere, and Ada Lovelace), accelerate the matrix operations commonly used in deep learning.

- Other Dedicated Hardware: Some GPUs also include other specialized units, such as RT cores for ray tracing. These accelerate rendering workloads rather than neural-network training, so weigh them accordingly.

Compatibility with Deep Learning Frameworks:

- Ensure that the GPU is supported by popular deep learning frameworks like TensorFlow, PyTorch, and Keras.

- Check for optimized libraries and drivers that enhance GPU performance with these frameworks, such as NVIDIA's CUDA Toolkit and cuDNN.
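Once drivers and a framework are installed, it is worth confirming that the framework actually sees the GPU. The sketch below uses PyTorch as the example framework and only calls documented `torch.cuda` functions; the function name and the returned dictionary layout are illustrative choices, and the check degrades gracefully on machines without PyTorch or without a GPU.

```python
import importlib.util


def describe_accelerator():
    """Report whether PyTorch is installed and can see a CUDA GPU.

    Returns a dict instead of raising, so it also runs on machines without
    PyTorch or without a GPU.
    """
    info = {"torch_installed": False, "cuda_available": False, "devices": []}
    if importlib.util.find_spec("torch") is None:
        return info
    import torch  # imported lazily so the check works even without PyTorch

    info["torch_installed"] = True
    info["cuda_available"] = torch.cuda.is_available()
    if info["cuda_available"]:
        # List every visible GPU by name, e.g. to confirm a multi-GPU setup.
        info["devices"] = [
            torch.cuda.get_device_name(i) for i in range(torch.cuda.device_count())
        ]
    return info


print(describe_accelerator())
```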

Single vs. Multi-GPU Setup:

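- Decide whether a single GPU or multiple GPUs in parallel best suits your machine learning workloads. Deep learning frameworks coordinate multiple GPUs over PCIe or, on cards that support it, NVLink; SLI is a gaming technology and is not used for machine learning. Note that the RTX 4090 does not support NVLink.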

Power Consumption and Cooling:

- Consider the GPU’s power requirements and ensure that your system’s power supply and cooling solution can handle it.
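A quick way to size the power supply is to add up the rated power of the main components and leave headroom for transient spikes. NVIDIA rates the RTX 4090 at 450 W of total graphics power; the 150 W CPU figure, the 100 W allowance for the rest of the system, and the 30% headroom below are assumptions for illustration, so check your own components' specifications and the GPU maker's PSU recommendation.

```python
import math


def recommended_psu_watts(gpu_watts, cpu_watts, num_gpus=1, other_watts=100, headroom=1.3):
    """Estimate a power-supply rating for a build (rule of thumb, not a specification).

    Sums the rated power of the GPUs and CPU plus an allowance for drives, fans,
    RAM and the motherboard, then multiplies by a headroom factor.
    """
    load = gpu_watts * num_gpus + cpu_watts + other_watts
    needed = load * headroom
    # Round up to the next 50 W step, since PSUs are usually sold in such steps.
    return math.ceil(needed / 50) * 50


print(recommended_psu_watts(450, 150))              # one RTX 4090: 950
print(recommended_psu_watts(450, 150, num_gpus=2))  # two RTX 4090s: 1500
```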

Budget Constraints:

- Balance your GPU choice with your budget, as high-end GPUs can be costly. Consider options that provide the best value for your specific machine learning tasks.

Future-Proofing:

- Anticipate the future needs of your machine learning projects and choose a GPU that can handle upcoming challenges and advancements in the field.

Compatibility with Other Hardware:

- Ensure compatibility with other components of your custom PC, such as the motherboard, power supply, and cooling solution.

The Top GPUs for a Machine Learning Custom PC

- Gigabyte GeForce RTX™ 3090 Ti GAMING OC 24G: $2,507.00 w/GST
- ASUS TUF-RTX4090-O24G-GAMING (3Y): $3,899.00 w/GST

1. NVIDIA GeForce RTX Series

Most models within the NVIDIA RTX Series, notably the RTX 40 series, have gained significant attention and popularity within the machine learning community due to their exceptional computational capabilities and efficiency.

The RTX 3090 Ti graphics card has 10,752 CUDA cores, while the RTX 4090 raises that to 16,384. Combined with the newer Ada Lovelace architecture, this increase gives the RTX 4090 substantially higher performance.

The GeForce RTX 4090 is a great card for deep learning. It is not only significantly faster than the previous-generation flagship consumer GPU, the GeForce RTX 3090, but also more cost-effective in terms of training throughput per dollar.

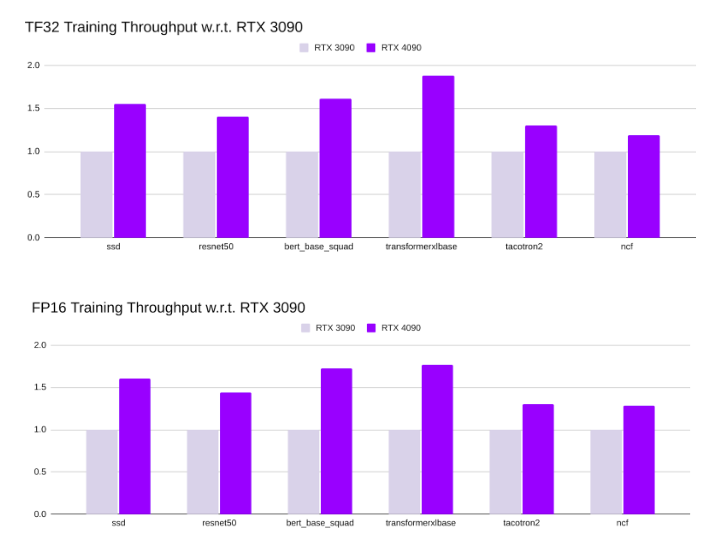

Research Credit: Lambdalabs

Training throughput measures how many training samples (for example, images) a GPU processes per second for a given model, which makes it a practical way to compare GPUs on the same workload. In Lambda's PyTorch training benchmarks, the RTX 4090 has significantly higher throughput: depending on the model, its TF32 training throughput is 1.3x to 1.9x that of the RTX 3090.
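If you want to compare GPUs on your own workload, throughput can be measured by timing repeated training steps. The sketch below assumes a hypothetical `train_step` callable that processes one batch; it skips a few warm-up steps, since first iterations often include one-time costs. When timing a real PyTorch step on a GPU, call `torch.cuda.synchronize()` before reading the clock, because CUDA kernels run asynchronously.

```python
import time


def measure_throughput(train_step, batch_size, num_steps=20, warmup_steps=3):
    """Return training throughput in samples per second.

    train_step is any callable that processes one batch of batch_size samples.
    Warm-up steps are excluded from the timing.
    """
    for _ in range(warmup_steps):
        train_step()
    start = time.perf_counter()
    for _ in range(num_steps):
        train_step()
    elapsed = time.perf_counter() - start
    return num_steps * batch_size / elapsed


# Hypothetical stand-in for a training step that takes about 10 ms per batch of 64.
print(f"{measure_throughput(lambda: time.sleep(0.01), batch_size=64):.0f} samples/s")
```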

2. NVIDIA Quadro Series

The NVIDIA Quadro Series is a family of professional-grade GPUs specifically designed for use in workstations. Unlike the GeForce series, which is tailored primarily for gaming, the Quadro GPUs are optimized for a wide range of professional applications. These GPUs are renowned for their reliability, precision, and performance, making them a top choice for professionals in fields like computer-aided design (CAD), 3D rendering, scientific simulations, and machine learning.

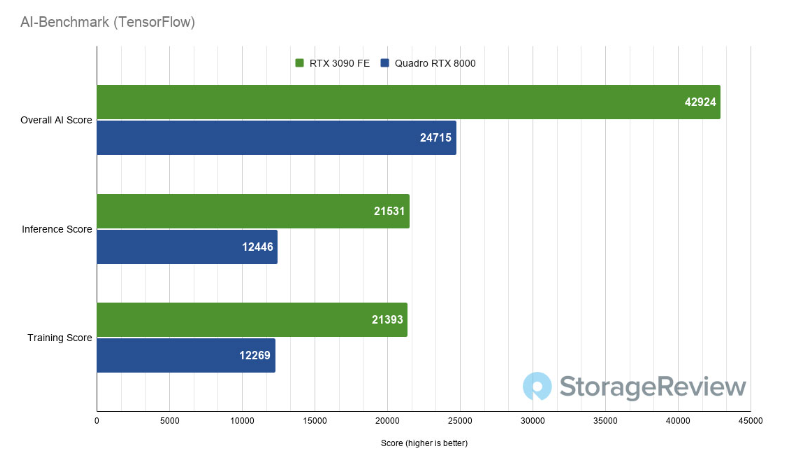

In the comparison credited below, the RTX 4090 offers 17% better value for money than the Quadro RTX 8000. The machine learning performance of these cards was measured with AI-Benchmark, an open-source Python library that runs a series of deep learning tests using the TensorFlow machine learning library.

Research Credit: StorageReview

However, Quadro cards can outperform the RTX 3090 in many professional editing and productivity applications because they use different, application-certified drivers. These drivers are why Quadro cards can be several times faster than GeForce GTX and RTX cards in certain workloads. Quadro cards are also built slightly differently and are designed to tolerate higher temperatures.
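A value-for-money figure like the 17% above is a ratio of performance per dollar. The sketch below shows that arithmetic; the scores and prices in the example are hypothetical, and any consistent metric (a benchmark score or training throughput) can be used as the score.

```python
def perf_per_dollar(score, price):
    """Benchmark score (or throughput) per unit of currency."""
    return score / price


def relative_value(score_a, price_a, score_b, price_b):
    """How much more performance per dollar card A gives than card B, as a fraction.

    A result of 0.17 means card A delivers 17% more performance per dollar than card B.
    """
    return perf_per_dollar(score_a, price_a) / perf_per_dollar(score_b, price_b) - 1


# Hypothetical cards: A scores 360 at $3,000, B scores 200 at $2,000.
print(f"{relative_value(360, 3000, 200, 2000):.0%}")  # 20%
```

A faster card can therefore still be the worse value if its price rises faster than its performance.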

Building the Ultimate Custom PC for Machine Learning

Image Credit: TheSmartLocal

Alongside the GPU, the Central Processing Unit (CPU) sits at the heart of any machine learning custom PC. While the GPU accelerates the heaviest computations, the CPU remains a crucial component for a well-balanced system.

Machine learning projects often begin with extensive data preprocessing. This includes tasks like cleaning, formatting, and transforming data before feeding it into your models. The CPU plays a pivotal role in these operations, ensuring your data is ready for training.
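As a small example of the CPU-side preprocessing described above, the sketch below drops records with missing values and min-max scales each numeric column to the range [0, 1]. The row layout (lists of numbers, with `None` marking a missing value) is an assumption for illustration; in practice this work is usually done with libraries such as pandas.

```python
def clean_and_scale(rows):
    """Drop records with missing values, then min-max scale each column to [0, 1].

    rows is a list of equal-length lists of numbers; None marks a missing value.
    """
    complete = [r for r in rows if all(v is not None for v in r)]
    if not complete:
        return []
    columns = list(zip(*complete))
    lows = [min(c) for c in columns]
    highs = [max(c) for c in columns]
    scaled = []
    for r in complete:
        scaled.append([
            # A constant column has no spread; map it to 0.0 to avoid dividing by zero.
            (v - lo) / (hi - lo) if hi != lo else 0.0
            for v, lo, hi in zip(r, lows, highs)
        ])
    return scaled


data = [[1.0, 10.0], [None, 20.0], [3.0, 30.0], [5.0, 10.0]]
print(clean_and_scale(data))  # [[0.0, 0.0], [0.5, 1.0], [1.0, 0.0]]
```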

While GPUs shine during the training phase, many machine learning applications also require models to make predictions in real time. Here, the CPU handles the surrounding pipeline (receiving inputs, preparing them for the model, and acting on the results), and smaller models can run inference on the CPU alone. This matters especially in applications like autonomous vehicles and robotics.

Some machine learning workloads are inherently CPU-bound, meaning they rely heavily on the CPU’s processing power. These include text tokenization and other preprocessing steps in natural language processing pipelines, as well as classical algorithms such as many tree-based models. A robust CPU ensures these operations run smoothly.

When selecting a CPU for your machine learning custom PC, consider these key factors:

a. Clock Speed: Higher clock speeds translate to faster processing of individual tasks. Look for CPUs with high base and turbo clock speeds, as they can provide a significant boost in performance.

b. Core Count: Machine learning libraries and frameworks often take advantage of multi-core CPUs. More cores allow for parallel processing, speeding up tasks that can be divided among multiple cores.

c. Compatibility with GPU(s): Ensure that your chosen CPU is compatible with the GPU(s) you plan to use. This includes checking that the CPU provides enough PCIe lanes and that the motherboard has suitable GPU slots and configurations. AMD Ryzen and Intel Core processors are popular choices, with varying performance levels to suit your needs.

Motherboard Considerations

The motherboard serves as the backbone of your machine learning custom PC, impacting performance, compatibility, and expandability.

The motherboard determines how components communicate with each other. A high-quality motherboard with efficient data pathways ensures that your CPU, GPU(s), RAM, and storage work together seamlessly.

As your machine learning needs grow, you may want to add more GPUs, RAM, or storage. Select a motherboard with sufficient PCIe slots, RAM slots, and storage connectors to accommodate future upgrades.

Ensure the motherboard is compatible with your chosen CPU and GPU(s). Check for the correct CPU socket type (e.g., LGA 1200 for Intel, AM4 for AMD) and ensure it supports the GPU slot(s) required for your configuration.

Machine learning often involves working with large datasets. You should opt for a motherboard that supports fast storage solutions like NVMe SSDs. These can significantly reduce data loading times and improve overall system responsiveness.
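The effect of storage speed on loading times is simple arithmetic: dataset size divided by sustained read speed. The read speeds below are assumed, typical-order figures for illustration rather than measurements, and real loading also depends on file sizes, formats, and CPU-side decoding.

```python
def load_time_seconds(dataset_gb, read_mb_per_s):
    """Time to read a dataset once at a sustained sequential read speed.

    Uses decimal units (1 GB = 1000 MB), as drive manufacturers do.
    """
    return dataset_gb * 1000 / read_mb_per_s


# Illustrative sustained read speeds (assumed, not measured):
for name, speed in [("SATA SSD", 550), ("PCIe 4.0 NVMe SSD", 5000)]:
    print(f"{name}: {load_time_seconds(100, speed):.0f} s per pass over 100 GB")
```

Because training usually reads the dataset once per epoch, any difference per pass is multiplied by the number of epochs unless the data fits in RAM.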

Selecting the Optimal Amount and Type of RAM

Random Access Memory (RAM) is a fundamental component of any computer, and in the context of machine learning, its role is paramount. RAM serves as a high-speed, temporary storage location for data that the CPU and GPU(s) need to access quickly.

Machine learning models, especially deep neural networks, require extensive computational resources during training. System RAM plays a crucial role in this process by holding the dataset (or the portion currently loaded) and the mini-batches waiting to be sent to the GPU; when training runs on the CPU, it also holds the model parameters and intermediate results. A larger RAM capacity allows more data to be kept in memory, reducing the need to read data from slower storage devices (e.g., hard drives or SSDs). This, in turn, accelerates model training by minimizing data loading times.

Effect of RAM Capacity on Working with Complex Models and Batch Processing

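Complex machine learning models, such as deep convolutional neural networks or recurrent neural networks, often require substantial amounts of memory: GPU memory (VRAM) for the model itself during GPU training, and system RAM for the data pipeline that feeds it. A generous RAM capacity helps keep that pipeline running smoothly.

Batch processing, a common training technique, involves processing subsets (batches) of the training dataset in each iteration. Larger batches produce less noisy gradient estimates, which can lead to more stable and efficient model convergence. On a GPU, the maximum batch size is limited mainly by VRAM, while system RAM determines how much data can be loaded and buffered ahead of each batch.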
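The mini-batch loop described above can be sketched in a few lines. This plain-Python version assumes the whole dataset already sits in RAM as a list; framework tools such as PyTorch's `DataLoader` add parallel loading and device transfer on top of the same idea.

```python
import random


def iter_minibatches(samples, batch_size, shuffle=True, seed=None):
    """Yield successive mini-batches from an in-memory dataset.

    Shuffles once per call (i.e. per epoch). The final batch is smaller when
    the dataset size is not a multiple of batch_size.
    """
    indices = list(range(len(samples)))
    if shuffle:
        random.Random(seed).shuffle(indices)
    for start in range(0, len(indices), batch_size):
        yield [samples[i] for i in indices[start:start + batch_size]]


batches = list(iter_minibatches(list(range(10)), batch_size=4, shuffle=False))
print(batches)  # [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9]]
```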

Different Types of RAM (e.g., DDR4, DDR5)

RAM technology evolves over time, with DDR4 and DDR5 being two prevalent types in recent years. DDR4 is widely used and offers good performance for most machine learning tasks. DDR5 is faster and newer, but it requires a CPU and motherboard that support it; DDR4 and DDR5 modules are not interchangeable.

Impact of RAM Speed and Latency

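RAM speed is usually advertised in megahertz (MHz), although the figure is really a data rate in megatransfers per second (MT/s): DDR4-3200, for example, performs 3,200 million transfers per second. It determines how quickly data can be read from or written to RAM, and higher-speed RAM generally leads to better performance in memory-bound tasks. Latency is the delay between requesting data and receiving it. Modules list it as a CAS latency (CL) in clock cycles, and its actual duration in nanoseconds (ns) depends on the clock speed. Lower latency is favorable for tasks where quick access to data is critical. Memory-intensive tasks, such as large-scale data processing and simulations, benefit the most from high-speed, low-latency RAM.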
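Two quick calculations make these specifications comparable. Theoretical peak bandwidth is the transfer rate times 8 bytes per transfer (a 64-bit channel) times the number of channels, and CAS latency converts to nanoseconds as CL × 2000 / data rate, because DDR memory performs two transfers per clock cycle. The sketch assumes a dual-channel setup with 64 bits per channel; the modules in the example are illustrative.

```python
def peak_bandwidth_gb_s(mt_per_s, channels=2, bus_width_bits=64):
    """Theoretical peak bandwidth in GB/s: transfers/s x bytes per transfer x channels."""
    return mt_per_s * 1e6 * (bus_width_bits / 8) * channels / 1e9


def first_word_latency_ns(cas_latency, mt_per_s):
    """Convert CAS latency (in clock cycles) to nanoseconds.

    DDR makes two transfers per clock, so the clock runs at mt_per_s / 2 MHz
    and one cycle lasts 2000 / mt_per_s nanoseconds.
    """
    return cas_latency * 2000 / mt_per_s


print(peak_bandwidth_gb_s(3200))        # DDR4-3200, dual channel: 51.2
print(first_word_latency_ns(16, 3200))  # DDR4-3200 CL16: 10.0
print(first_word_latency_ns(40, 6000))  # DDR5-6000 CL40: about 13.3
```

This is why a faster module with a higher CL number is not necessarily slower in absolute latency: the higher clock shortens each cycle.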

Benefits of Error-Correcting Code (ECC) RAM

ECC RAM stores additional check bits that allow it to correct single-bit errors and detect (but not correct) double-bit errors. This feature ensures data integrity, preventing potential corruption of critical data during machine learning tasks. For professional machine learning tasks, ECC RAM is especially valuable because it reduces the risk of undetected errors that could compromise research results, data accuracy, and model reliability. Note that ECC RAM only works with a CPU and motherboard that support it.
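The principle behind single-bit correction can be shown with the classic Hamming(7,4) code, which protects 4 data bits with 3 parity bits. This is a toy illustration only: conventional ECC DIMMs use a wider SECDED code (typically 8 check bits per 64 data bits) implemented in the memory controller, not in software.

```python
def hamming74_encode(d):
    """Encode 4 data bits [d1, d2, d3, d4] into a 7-bit Hamming codeword.

    Positions 1..7 hold p1, p2, d1, p3, d2, d3, d4. Each parity bit covers the
    positions whose 1-based index has that power-of-two bit set.
    """
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4  # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(c):
    """Correct up to one flipped bit; return (data_bits, error_position).

    error_position is 0 when no error was found, otherwise the 1-based
    position of the bit that was flipped back.
    """
    c = list(c)
    # Each syndrome bit re-checks one parity group; together they spell the
    # 1-based index of the flipped bit in binary.
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    pos = s1 + 2 * s2 + 4 * s3
    if pos:
        c[pos - 1] ^= 1
    return [c[2], c[4], c[5], c[6]], pos


word = hamming74_encode([1, 0, 1, 1])
word[4] ^= 1  # simulate a single-bit error at position 5
print(hamming74_decode(word))  # ([1, 0, 1, 1], 5)
```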

Recommended Scenarios for ECC RAM

ECC RAM is highly recommended for researchers, professionals, and organizations involved in critical machine learning applications, particularly in scientific research, healthcare, finance, and autonomous systems.

Tasks where data integrity is non-negotiable, such as medical image analysis, climate modeling, or autonomous vehicle development, should prioritize ECC RAM to maintain the highest level of reliability.

Future proofing your custom PC

Building a custom PC for machine learning is not just about meeting today’s needs but also preparing for tomorrow’s challenges.

Choose components and a motherboard that allow for easy upgrades. This includes selecting a motherboard with extra PCIe slots for additional GPUs and ensuring compatibility with future CPU generations. Keep an eye on emerging AI hardware innovations. In the rapidly evolving field of AI, specialized hardware like AI accelerators may become essential for certain tasks.

Follow developments in machine learning hardware and software. Be ready to adapt to new techniques, frameworks, and tools that can enhance your machine learning capabilities.

Build a custom PC with a modular design, making it easy to swap out components such as GPUs, RAM, and storage as your needs change.

Conclusion

Selecting the right Graphics Processing Unit (GPU) for machine learning is the cornerstone of your custom PC. GPUs excel in parallel computing, making them indispensable for accelerating machine learning tasks. They also play a pivotal role in training deep neural networks, driving advancements in AI research. Each new GPU generation also tends to deliver more performance per watt, reducing the energy needed for the same work.

Building a machine learning PC in Singapore involves several critical decisions, including choosing the rest of your custom PC’s components and ensuring they are compatible, which influences overall performance and expandability. With the right hardware configuration and a passion for learning, you’re well on your way to unleashing the full potential of machine learning, whether you’re a researcher, data scientist, or AI enthusiast.